In microservice architectures, where services frequently communicate with each other, the latency of network requests sent via the loopback (lo) interface can significantly impact application performance. These requests traverse the local network stack at least twice, potentially creating a performance bottleneck, especially when handling a high volume of concurrent requests.

In this week's newsletter, we will discuss how eBPF sockops programs tackle this issue by enabling direct packet transfer between sockets on the same machine. This approach reduces CPU overhead and minimizes the latency associated with packet forwarding within the TCP/IP stack.

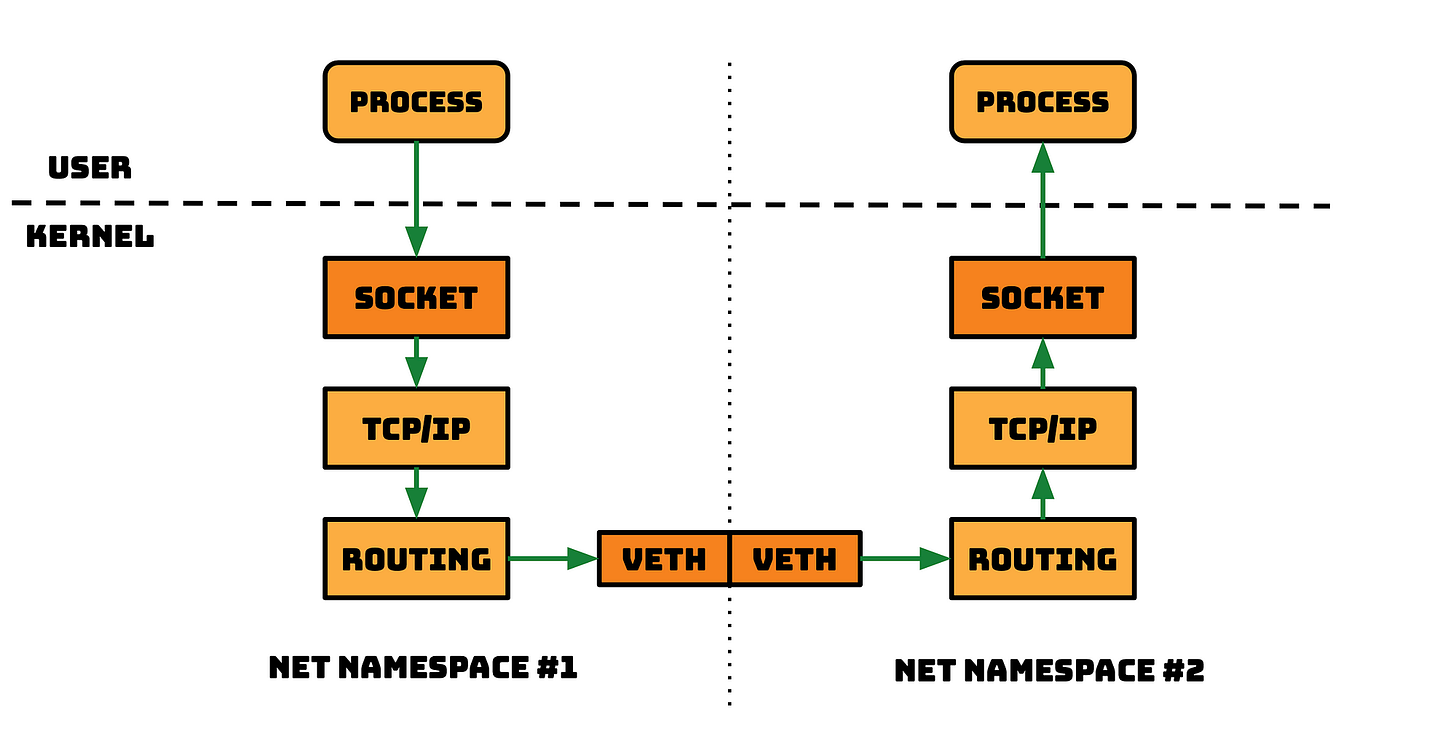

The image above depicts a scenario in which two processes in two different network namespaces on the same host are communicating. The same holds true within a single network namespace, as a packet still needs to travel down and back up through the TCP/IP stack.

We encounter numerous instances of such scenarios, though the overhead becomes particularly noticeable in high-performance applications.

Take, for instance, two Kubernetes pods communicating with each other: a packet moving from one pod to another takes precisely this route. Cilium has found an effective way to alleviate this overhead by using eBPF to splice sockets together with SockMap.

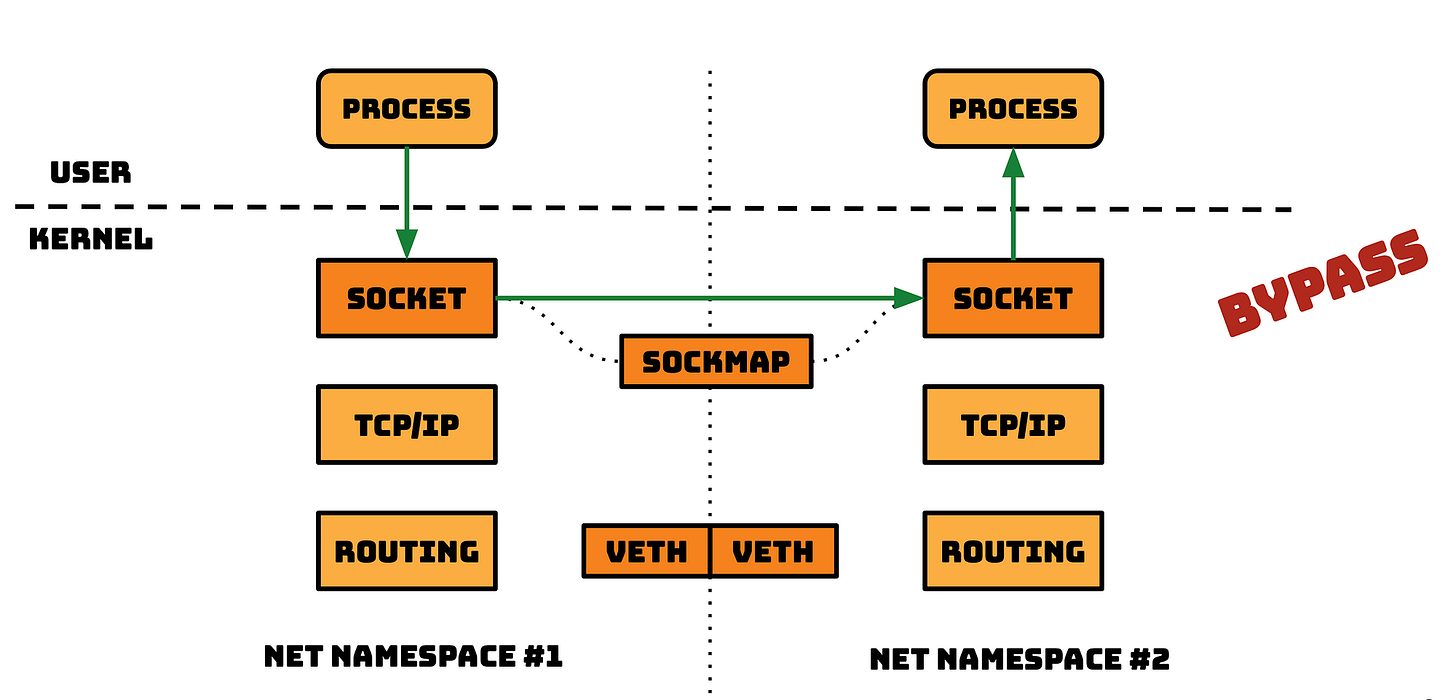

eBPF SockMap

⚠️ Note: Instead of delving into the complexities of a Kubernetes setup and containers, we are showcasing this concept using two processes. However, it’s important to note that the same principles apply.

At a high level, whenever a socket in network namespace #1 wants to send a message to a socket residing in network namespace #2 (on the same host), an eBPF program is triggered by the sendmsg() syscall. Instead of forwarding the packet down and up the network stack as seen above, this program redirects it directly to the receiving socket, reducing the overhead.

I think you get the idea.

To be more precise, there are two eBPF programs that enable this, which communicate through a Sockmap eBPF map:

- sk_msg hook: This program is attached to a Sockmap eBPF map file descriptor and is invoked when a sendmsg() or sendfile() syscall is executed, but ONLY on sockets residing in that same eBPF map. This enables message redirection, avoiding the bottleneck of the local network stack.
- sockops hook: This program is called whenever there’s a socket operation in a particular cgroup (retransmit timeout, connection establishment, etc.) and is used to insert new socket objects into the eBPF map.
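To make the interplay of the two hooks more concrete, here is a minimal sketch in eBPF C. It is not necessarily how the code in the GitHub repository looks. I am assuming IPv4 TCP only and the SOCKHASH flavour of the map, and the names `sock_key`, `fill_key`, `sock_map`, `track_established` and `redirect_to_peer` are made up for illustration. The kernel part is only compiled when targeting BPF; on a regular host compiler just the key helper is built, which makes the keying logic easy to check on its own.

```c
/* Illustrative sketch under the assumptions stated above.
 * Kernel side: clang -O2 -g -target bpf -c sockmap_sketch.bpf.c
 * (needs the libbpf headers). */
#ifdef __bpf__
#include <linux/bpf.h>
#include <bpf/bpf_helpers.h>
#include <bpf/bpf_endian.h>
#else
#include <linux/types.h>   /* host build: only the key helper is compiled */
#endif

/* Hypothetical key: one TCP connection as seen from one socket.
 * IPs stay in network byte order, ports are normalized to host order. */
struct sock_key {
    __u32 local_ip4;
    __u32 local_port;
    __u32 remote_ip4;
    __u32 remote_port;
};

static inline void fill_key(struct sock_key *key,
                            __u32 local_ip4, __u32 local_port,
                            __u32 remote_ip4, __u32 remote_port)
{
    key->local_ip4 = local_ip4;
    key->local_port = local_port;
    key->remote_ip4 = remote_ip4;
    key->remote_port = remote_port;
}

#ifdef __bpf__
/* The map shared by both programs: connection key -> socket. */
struct {
    __uint(type, BPF_MAP_TYPE_SOCKHASH);
    __uint(max_entries, 65535);
    __uint(key_size, sizeof(struct sock_key));
    __uint(value_size, sizeof(int));
} sock_map SEC(".maps");

/* sockops hook: remember every newly established IPv4 TCP socket. */
SEC("sockops")
int track_established(struct bpf_sock_ops *skops)
{
    struct sock_key key;

    if (skops->family != 2) /* AF_INET only in this sketch */
        return 0;
    if (skops->op != BPF_SOCK_OPS_ACTIVE_ESTABLISHED_CB &&
        skops->op != BPF_SOCK_OPS_PASSIVE_ESTABLISHED_CB)
        return 0;

    /* local_port is host order, remote_port is network order. */
    fill_key(&key, skops->local_ip4, skops->local_port,
             skops->remote_ip4, bpf_ntohl(skops->remote_port));
    bpf_sock_hash_update(skops, &sock_map, &key, BPF_NOEXIST);
    return 0;
}

/* sk_msg hook: on send, look up the peer socket and hand it the data. */
SEC("sk_msg")
int redirect_to_peer(struct sk_msg_md *msg)
{
    struct sock_key peer;

    /* The peer stored itself with local and remote swapped. */
    fill_key(&peer, msg->remote_ip4, bpf_ntohl(msg->remote_port),
             msg->local_ip4, msg->local_port);
    /* If the peer is not in the map, nothing is redirected and
     * SK_PASS lets the message take the normal stack path. */
    bpf_msg_redirect_hash(msg, &sock_map, &peer, BPF_F_INGRESS);
    return SK_PASS;
}

char LICENSE[] SEC("license") = "GPL";
#endif
```

The important detail is the key swap: a socket registers itself under (local, remote), so the sender finds its peer by looking up (remote, local). Traffic whose peer is not on the same host simply misses the map and continues through the regular stack.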

⁉️ Why cgroups hook point?

On Linux, processes (and, through them, their sockets) are organized hierarchically into cgroups, which is also how systemd groups its units. Limiting this program to a specific cgroup is a valuable feature, as it allows us to easily target and reorganize the processes that the program should affect.
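To illustrate what "limiting the program to a cgroup" means in practice, here is a small user-space sketch that attaches an already-loaded sockops program to a cgroup directory using the raw bpf() syscall. The function name `attach_sockops_to_cgroup` and the way the program file descriptor is obtained are assumptions for this example. In a real loader you would more likely use libbpf's `bpf_prog_attach()` instead.

```c
#define _GNU_SOURCE
#include <errno.h>
#include <fcntl.h>
#include <string.h>
#include <unistd.h>
#include <sys/syscall.h>
#include <linux/bpf.h>

/* Attach an already-loaded sockops program (prog_fd) to the cgroup v2
 * directory at cgroup_path. Returns 0 on success, -1 on failure with
 * errno set. Hypothetical helper, for illustration only. */
static int attach_sockops_to_cgroup(int prog_fd, const char *cgroup_path)
{
    union bpf_attr attr;
    int cgroup_fd, err, saved_errno;

    /* The attach target is simply an fd of the cgroup directory. */
    cgroup_fd = open(cgroup_path, O_RDONLY | O_DIRECTORY);
    if (cgroup_fd < 0)
        return -1;

    memset(&attr, 0, sizeof(attr));
    attr.target_fd = (__u32)cgroup_fd;
    attr.attach_bpf_fd = (__u32)prog_fd;
    attr.attach_type = BPF_CGROUP_SOCK_OPS;

    err = (int)syscall(__NR_bpf, BPF_PROG_ATTACH, &attr, sizeof(attr));

    /* Keep the errno from bpf() even if close() changes it. */
    saved_errno = errno;
    close(cgroup_fd);
    errno = saved_errno;

    return err < 0 ? -1 : 0;
}
```

Once attached, only sockets of processes inside that cgroup (and its descendants) trigger the sockops program. Moving a process into or out of the cgroup is enough to change whether it is affected, without reloading anything.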

Code Example

I find rendering code examples in Substack tedious, so I’ll refer you to my GitHub repository, which contains the code and test results.

Here’s the link.

💡 Make sure you check the code comments :)

I hope you find this resource as enlightening as I did. Stay tuned for more exciting developments and updates in the world of eBPF in next week's newsletter.

Until then, keep 🐝-ing!

Warm regards, Teodor